Generative AI has been making waves in the tech industry, with its ability to create new content from scratch. It’s used in a variety of applications, from creating realistic images to composing music to writing text. If you’re a developer interested in this field, here are some frameworks and tools you should know about.

LangChain

LangChain is an open-source framework that simplifies building applications on top of large language models (LLMs). It provides a standard interface for chains, many integrations with other tools, and end-to-end chains for common applications. It lets developers combine LLMs such as GPT-4 with external sources of computation and data.

What is LangChain?

LangChain is essentially a library of abstractions for Python and JavaScript that represent the common steps and concepts needed to work with language models. These modular components, such as functions and object classes, serve as the building blocks of generative AI programs.

A typical LangChain pipeline works as follows. A user asks a question, and the question is converted into a vector representation. That vector is used to run a similarity search against a vector database, and the relevant information it returns is passed to the language model together with the question. The language model then generates an answer or takes an action.
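The pipeline described above can be sketched in plain Python. This is a minimal illustration and not LangChain's API: `embed`, `ToyVectorStore`, `build_prompt`, and `echo_llm` are hypothetical names, the "embedding" is a simple bag-of-words count, and the model call is a stand-in that a real application would replace with an actual LLM.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count (assumption; real apps use an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self, documents):
        self.entries = [(doc, embed(doc)) for doc in documents]

    def similarity_search(self, query, k=1):
        """Return the k documents most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(query_vec, e[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_prompt(question, context_docs):
    """Combine the retrieved context and the question into one prompt."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


def echo_llm(prompt):
    """Stand-in for a model call; it only reports the prompt size."""
    return f"[model response to a {len(prompt)}-character prompt]"


def answer(question, store, llm=echo_llm, k=1):
    """Retrieve relevant documents, then hand them to the model with the question."""
    docs = store.similarity_search(question, k=k)
    return llm(build_prompt(question, docs))
```

To try it, build a `ToyVectorStore` from a few sentences and call `answer("What is the capital of France?", store)`. Swapping `embed` for a real embedding model and `echo_llm` for a real LLM client keeps the same overall shape.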

What are some use cases of LangChain?

LangChain can be used to build a wide range of LLM-powered applications. Some examples are:

- Document analysis and summarization: LangChain can be used to analyze documents and generate summaries based on them.

- Chatbots: LangChain can be used to build chatbots that interact with users naturally.

- Code analysis: LangChain can be used to analyze code and find potential bugs or security flaws.

- Answering questions using sources: LangChain can be used to answer questions using a variety of sources, including text, code, and data.

- Data augmentation: LangChain can be used to augment data by generating new data that is similar to existing data.

- Text classification: LangChain can be used for text classification and sentiment analysis on input text.

- Text summarization: LangChain can be used to summarize text in a specified number of words or sentences.

- Machine translation: LangChain can be used to translate the input text data into different languages.

SingleStore Notebooks

SingleStore is a distributed, in-memory, SQL database management system designed for high-performance, high-velocity applications. One of its standout features is the SingleStore Notebook, which extends the capabilities of the Jupyter Notebook, enabling data and AI professionals to easily work and experiment.

What are SingleStore Notebooks?

SingleStore Notebooks are Jupyter notebooks that support development using SQL and Python. They are stored in either Shared or Personal “folders” in SingleStoreDB Cloud. Notebooks stored in the Shared area are available to any other users who have access to the same workgroup. Notebooks stored in the Personal area are only visible to the notebook creator and cannot be shared.

Key Features of SingleStore Notebooks

SingleStore Notebooks offer a range of features that make them a powerful tool for data professionals:

- Connect to Data Sources: SingleStoreDB Cloud supports internal and external data sources. Internal data sources are databases that exist within your workspace. An external data source could be an AWS S3 bucket, for example.

- Manage Cells: SingleStore Notebooks allow you to manage cells, which are units of code that can contain one or more lines of code or text. You can run a cell, manipulate cells, and manage cell inputs and outputs.

- Use Multiple Languages: SingleStore Notebooks support both Python and SQL cells. You can switch between Python cells and SQL cells by clicking on them or using keyboard shortcuts.

- Manage Libraries: SingleStore Notebooks come with preinstalled libraries and also allow you to install and import libraries.

- Magic Commands: SingleStore Notebooks support magic commands, which are special commands that are not part of the Python or SQL programming languages but are added by the Jupyter kernel to solve various problems.

LlamaIndex

LlamaIndex is an open-source data framework that simplifies building LLM applications over your own data. It provides a standard interface for connecting custom data sources to LLMs and generating knowledge-augmented responses, so developers can combine LLMs such as GPT-4 with external data.

What is LlamaIndex?

LlamaIndex is essentially a library of abstractions for Python and TypeScript that represent the common steps and concepts needed to work with language models. These modular components, such as functions and object classes, serve as the building blocks of generative AI programs.

LlamaIndex follows a similar general pipeline. A user asks a question, and the vector representation of the question is used to run a similarity search against a vector database. The relevant information is fetched from the vector database and passed to the language model along with the question. The language model then generates an answer or takes an action.
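Before the similarity search described above can run, custom data has to be split into pieces small enough to retrieve individually. The sketch below shows one simple way to do that, with overlapping word-based chunks that remember their source. It is an illustration only and not LlamaIndex's API: `Node`, `split_into_nodes`, and `build_index` are hypothetical names, and the chunk sizes are arbitrary.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """One retrievable chunk of a source document."""
    text: str
    source: str
    position: int


def split_into_nodes(text, source, chunk_size=8, overlap=2):
    """Split text into overlapping word chunks so context is not cut mid-thought."""
    step = chunk_size - overlap
    if step <= 0:
        raise ValueError("chunk_size must be larger than overlap")
    words = text.split()
    nodes = []
    for position, start in enumerate(range(0, len(words), step)):
        chunk = words[start:start + chunk_size]
        nodes.append(Node(" ".join(chunk), source, position))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return nodes


def build_index(documents, chunk_size=8, overlap=2):
    """Flatten a {source_name: text} mapping into one list of nodes."""
    index = []
    for source, text in documents.items():
        index.extend(split_into_nodes(text, source, chunk_size, overlap))
    return index
```

Each node would then be embedded and stored in a vector database. Keeping `source` and `position` on the node makes it possible to cite where a retrieved passage came from.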

Llama 2

Llama 2 is the next generation of large language models (LLMs) developed and released by Meta. It’s pre-trained on 2 trillion tokens of public data and is designed to enable developers and organizations to build generative AI-powered tools and experiences. This section introduces the basics of Llama 2 and its potential applications.

What is Llama 2?

Llama 2 is an open-source large language model that outperforms other major open-source models like Falcon or MPT, making it one of the most powerful on the market today. It’s the Facebook parent company’s response to OpenAI’s GPT models and Google’s AI models like PaLM, with one key difference: under Meta’s community license, it’s freely available for almost anyone to use for research and commercial purposes.

Key Features of Llama 2

Llama 2 outperforms other open-source language models on many external benchmarks, including reasoning, coding, proficiency, and knowledge tests. Compared to ChatGPT and Bard, Llama 2 shows promise in coding skills, performing well in functional tasks but struggling with more complex ones like creating a Tetris game.

Applications of Llama 2

Llama 2 can be used in a variety of applications, including but not limited to:

- Document Analysis and Summarization: Llama 2 can analyze documents and generate summaries based on them.

- Chatbots: Llama 2 can be used to build chatbots that interact with users naturally.

- Code Analysis: Llama 2 can analyze code and find potential bugs or security flaws.

- Answering Questions: Llama 2 can answer questions using a variety of sources, including text, code, and data.

- Data Augmentation: Llama 2 can augment data by generating new data that is similar to existing data.
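For the chatbot use case in the list above, the chat-tuned Llama 2 models expect prompts wrapped in specific markers. The sketch below builds a single-turn prompt in that style. The `[INST]` and `<<SYS>>` markers follow Meta's published reference code as I understand it, so check the model card before relying on them. `format_llama2_prompt` is a hypothetical helper name, and the tokenizer is assumed to add the beginning-of-sequence token itself.

```python
# Markers used by the Llama 2 chat models (assumption: matches Meta's reference code).
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def format_llama2_prompt(user_message, system_prompt=None):
    """Wrap one user message, and an optional system prompt, in chat markers."""
    content = user_message.strip()
    if system_prompt:
        # The system prompt is embedded inside the first instruction block.
        content = f"{B_SYS}{system_prompt.strip()}{E_SYS}{content}"
    return f"{B_INST} {content} {E_INST}"
```

The resulting string would be passed to whatever runtime serves the model. Multi-turn conversations need additional handling that this sketch leaves out.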

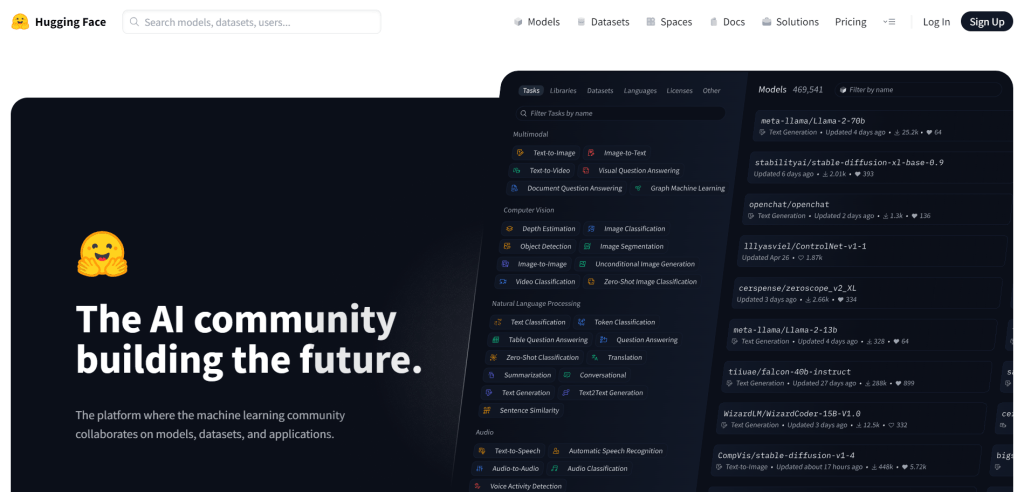

Hugging Face

Hugging Face is more than just an emoji; it’s a game-changer in the world of Natural Language Processing (NLP) and Artificial Intelligence (AI). It’s an open-source platform that acts as a hub for AI experts and enthusiasts. It’s like a GitHub for AI, where data scientists, researchers, and machine learning engineers converge to exchange ideas, seek support, and contribute to open-source initiatives.

What is Hugging Face?

Hugging Face is changing how companies use NLP models by making them accessible to everyone. It builds open-source libraries to support AI and machine learning projects, and it helps people and organizations avoid the high cost of training Transformer models from scratch by sharing pre-trained ones. It’s a platform where the machine-learning community collaborates on models, datasets, and applications.

Key Features of Hugging Face

Hugging Face offers a plethora of features that make it a go-to platform for AI and NLP innovation:

- Models: Hugging Face hosts a wide range of models, including state-of-the-art models for text generation, text-to-image, image-to-text, text-to-video, visual question answering, document question answering, and many more.

- Datasets: Hugging Face provides access to a vast number of datasets, making it easier for researchers and developers to train and test their models.

- Libraries: Hugging Face has developed several libraries, including the popular Transformers library, which provides state-of-the-art machine-learning models for PyTorch, TensorFlow, and JAX.

- Community: Hugging Face has a vibrant community of data scientists, researchers, and machine learning engineers who contribute to the platform and support each other.

Applications of Hugging Face

Hugging Face can be used in a variety of applications, including but not limited to:

- Text Classification: Hugging Face models can be used to classify text into different categories.

- Token Classification: Hugging Face models can be used to classify individual tokens (words or phrases) in a text.

- Question Answering: Hugging Face models can be used to answer questions based on a given context.

- Translation: Hugging Face models can be used to translate text from one language to another.

- Summarization: Hugging Face models can be used to generate a summary of a given text.

- Conversational AI: Hugging Face models can be used to build chatbots and virtual assistants.
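As a small illustration of the text classification item above, the sketch below uses the `pipeline` helper from the Transformers library. It assumes `transformers` and a backend such as PyTorch are installed, and that the default sentiment model can be downloaded on first use. `load_sentiment_classifier` and `classify_texts` are hypothetical helper names, not part of the library.

```python
def load_sentiment_classifier():
    """Load the default sentiment-analysis pipeline (downloads a model on first use)."""
    # Imported lazily so the rest of this module works without transformers installed.
    from transformers import pipeline
    return pipeline("sentiment-analysis")


def classify_texts(classifier, texts):
    """Run a classifier over texts and return (text, label, rounded score) tuples.

    `classifier` is any callable that maps a list of strings to a list of
    dicts with "label" and "score" keys, which is the shape a Transformers
    text-classification pipeline returns.
    """
    results = classifier(texts)
    return [(text, r["label"], round(r["score"], 3)) for text, r in zip(texts, results)]
```

A typical call would be `classify_texts(load_sentiment_classifier(), ["I love this library."])`. Because `classify_texts` accepts any callable with the same output shape, it is easy to swap in a different model from the Hub or a stub for testing.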

Haystack

Haystack is an open-source Python framework developed by deepset for building custom applications with large language models (LLMs). It’s designed to be flexible and easy to use, allowing developers to quickly experiment with the latest models in natural language processing (NLP).

What is Haystack?

Haystack is a comprehensive tool for developing state-of-the-art NLP systems that use LLMs (such as GPT-4, Falcon, and similar) and Transformer models. With Haystack, you can effortlessly experiment with various models hosted on platforms like Hugging Face, OpenAI, Cohere, or even models deployed on SageMaker and your local models to find the perfect fit for your use case.

Key Features of Haystack

Haystack offers comprehensive tooling for each stage of the NLP project life cycle:

- Deployment of Models: Haystack allows effortless deployment of models from Hugging Face or other providers into your NLP pipeline.

- Dynamic Templates for LLM Prompting: Haystack provides prompt templates whose placeholders are filled in at query time.

- Cleaning and Preprocessing Functions: Haystack offers cleaning and preprocessing functions for various formats and sources.

- Seamless Integrations with Document Stores: Haystack provides seamless integrations with your preferred document store (including many popular vector databases like Faiss, Pinecone, Qdrant, or Weaviate).

- Free Annotation Tool: Haystack offers a free annotation tool for a faster and more structured annotation process.

- Tooling for Fine-tuning a Pre-trained Language Model: Haystack includes tooling for fine-tuning a pre-trained language model on your own data.

- Specialized Evaluation Pipelines: Haystack offers specialized evaluation pipelines that use different metrics to evaluate the entire system or its components.

- Haystack’s REST API: Haystack provides a REST API to deploy your final system so that you can query it with a user-facing interface.
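Two of the features above, cleaning functions and dynamic prompt templates, are easy to picture with a small plain-Python sketch. This is not Haystack's API: `clean_document`, `PROMPT_TEMPLATE`, and `render_prompt` are hypothetical names, and the standard library's `string.Template` stands in for whatever templating engine the framework uses.

```python
import re
from string import Template

# A prompt with placeholders that are filled in for each query.
PROMPT_TEMPLATE = Template("Context:\n$context\n\nQuestion: $question\nAnswer:")


def clean_document(text):
    """Collapse runs of whitespace and strip leading and trailing space."""
    return re.sub(r"\s+", " ", text).strip()


def render_prompt(question, documents):
    """Clean the retrieved documents and fill them into the prompt template."""
    context = "\n".join(clean_document(doc) for doc in documents)
    return PROMPT_TEMPLATE.substitute(context=context, question=clean_document(question))
```

Keeping cleaning and templating as separate steps mirrors the pipeline idea: each stage can be replaced or tested on its own before the rendered prompt is sent to a model.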

Applications of Haystack

Haystack can be used to build a wide range of applications:

- Semantic Search: You can perform a semantic search on a large collection of documents in any language.

- Generative Question Answering: You can perform generative question answering on a knowledge base containing mixed types of information: images, text, and tables.

- Natural Language Chatbots: You can build natural language chatbots powered by cutting-edge generative models like GPT-4.

- Information Extraction: You can extract information from documents to populate your database or build a knowledge graph.

Generative AI is a rapidly evolving field, and these tools represent just the tip of the iceberg. As a developer, familiarizing yourself with these tools and frameworks will provide a solid foundation as you dive into the world of generative AI.