Google has announced Gemma, a new generation of open models designed to help developers and researchers build AI responsibly. This post covers what Gemma is, its key features, and its potential impact on the AI community.

With Gemma, Google follows Meta’s move toward openly available models. Gemma lets individuals and businesses build AI software on top of Google’s “open models.”

The company provides technical data, including model weights, for free, aiming to attract software engineers and promote its cloud division. While not fully open-source, Gemma offers opportunities for collaboration.

Google is also collaborating with Nvidia to ensure Gemma models run smoothly on Nvidia’s chips, expanding accessibility to AI technology. Gemma models come in two sizes, with two billion and seven billion parameters.
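
To give a rough sense of what those sizes mean for hardware, the sketch below estimates the memory needed just to hold the weights. It assumes the nominal counts of two and seven billion parameters and the usual bytes-per-parameter figures for each numeric format. The function name and the format table are illustrative, and the estimate ignores activations, the attention cache, and framework overhead.

```python
# Bytes used to store one parameter in each numeric format.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Approximate memory for the weights alone, in GB (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Nominal sizes taken from the model names; actual parameter counts may differ.
NOMINAL_SIZES = {"Gemma 2B": 2_000_000_000, "Gemma 7B": 7_000_000_000}

for name, params in NOMINAL_SIZES.items():
    for dtype in BYTES_PER_PARAM:
        print(f"{name} in {dtype}: ~{weight_memory_gb(params, dtype):.0f} GB")
```

Under these assumptions, the weights take about 4 GB for the 2B model and about 14 GB for the 7B model in bfloat16, which gives a first indication of which size fits on a given GPU.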

What is Gemma?

Gemma is a family of lightweight, state-of-the-art open models built from the same research and technology used to create the Gemini models. Developed by Google DeepMind and other teams across Google, Gemma is inspired by Gemini, and the name reflects the Latin gemma, meaning “precious stone”.

Key Features of Gemma

Gemma comes with a host of features that make it a valuable tool for developers and researchers:

- Model Sizes: Gemma is available in two sizes: Gemma 2B and Gemma 7B. Each size is released with pre-trained and instruction-tuned variants.

- Responsible Generative AI Toolkit: This toolkit provides guidance and essential tools for creating safer AI applications with Gemma.

- Toolchains for Inference and Supervised Fine-Tuning (SFT): These are provided across all major frameworks: JAX, PyTorch, and TensorFlow through native Keras 3.0.

- Ready-to-use Colab and Kaggle notebooks: Integration with popular tools such as Hugging Face, MaxText, NVIDIA NeMo, and TensorRT-LLM makes it easy to get started with Gemma.

- Optimization across multiple AI hardware platforms: Gemma is optimized for NVIDIA GPUs and Google Cloud TPUs, which Google says delivers industry-leading performance.
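
As a minimal sketch of what getting started through the Hugging Face integration might look like, the example below wraps a user message in the turn markers that the instruction-tuned variants expect and passes it to the `transformers` library. Several details are assumptions not stated in this post: the model ID `google/gemma-2b-it`, the helper names, and the requirement that you have accepted the model license on Hugging Face and have `transformers` and PyTorch installed.

```python
def build_gemma_prompt(user_message: str) -> str:
    """Wrap one user message in the turn markers used by instruction-tuned Gemma."""
    return (
        "<start_of_turn>user\n"
        f"{user_message}<end_of_turn>\n"
        "<start_of_turn>model\n"
    )


def generate_reply(
    user_message: str,
    model_id: str = "google/gemma-2b-it",  # assumed Hugging Face model ID
    max_new_tokens: int = 128,
) -> str:
    """Download the model (if needed) and generate a reply to one message."""
    # Imported lazily so the prompt helper works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # The tokenizer adds the beginning-of-sequence token itself.
    inputs = tokenizer(build_gemma_prompt(user_message), return_tensors="pt")
    outputs = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Keep only the tokens generated after the prompt.
    new_tokens = outputs[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `generate_reply("Explain what an open model is.")` would download the weights on first use, so expect a delay and several gigabytes of disk usage. The pre-trained (non-instruction-tuned) variants take plain text and do not need the turn markers.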

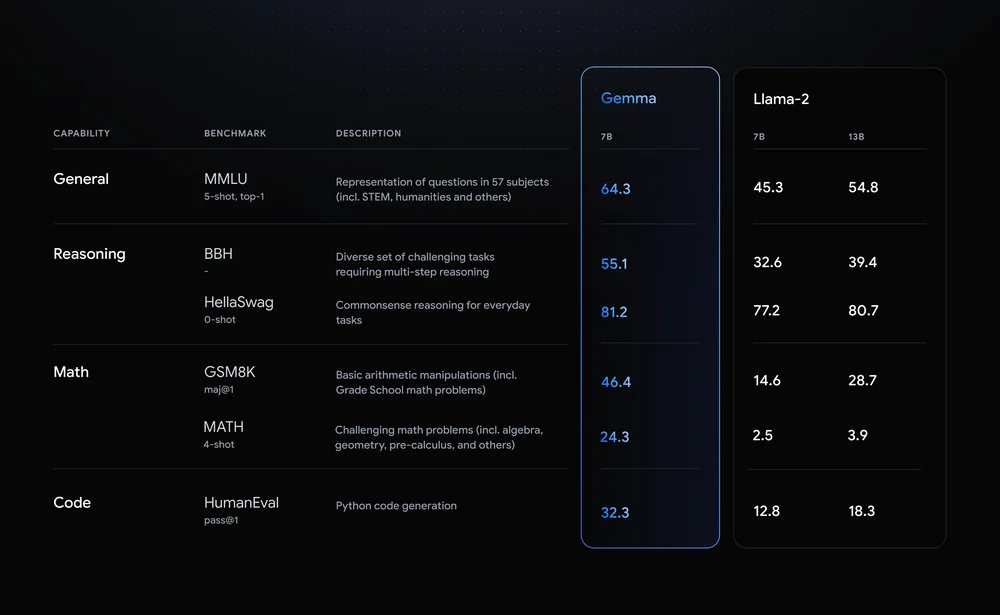

Gemma’s Performance

Gemma models share technical and infrastructure components with Gemini, Google’s largest and most capable AI model widely available today. According to Google, this enables Gemma 2B and 7B to achieve best-in-class performance for their sizes compared to other open models, and Gemma surpasses significantly larger models on key benchmarks while adhering to Google’s standards for safe and responsible outputs.

Responsible by Design

Gemma is designed with Google’s AI Principles at the forefront. As part of making Gemma’s pre-trained models safe and reliable, Google used automated techniques to filter out certain personal information and other sensitive data from training sets.

Free credits for research and development

Gemma is built for the open community of developers and researchers working on AI innovation. You can start using Gemma right away through free access on Kaggle, the free tier for Colab notebooks, or $300 in credits for first-time Google Cloud users. Additionally, researchers can apply for Google Cloud credits of up to $500,000 to accelerate their projects.

Conclusion

The introduction of Gemma marks a significant step forward in the democratization of AI models. By providing a lightweight, state-of-the-art open model that adheres to responsible AI principles, Google continues to contribute to the open community and pave the way for future innovations in AI technology.